H100

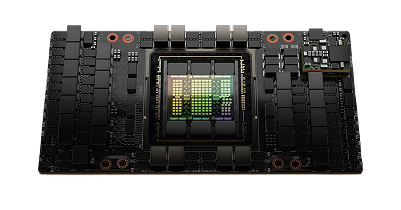

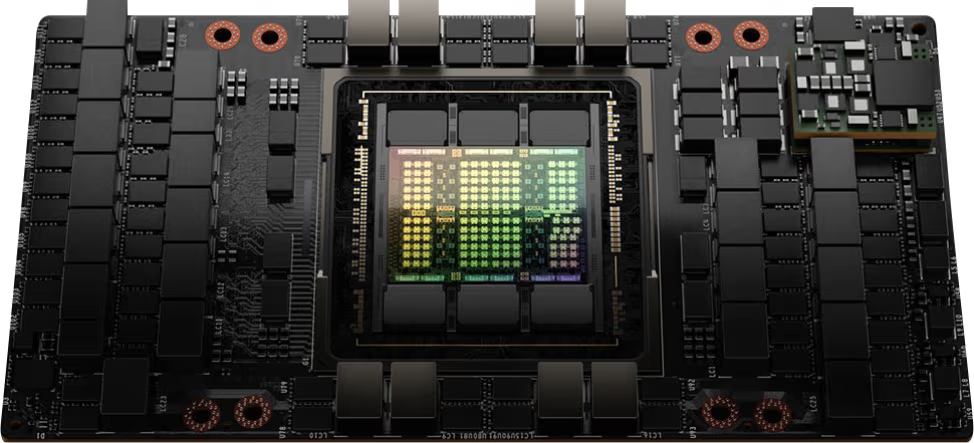

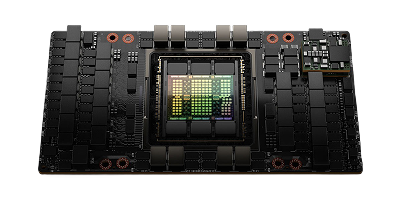

The NVIDIA H100 is built for large-scale AI applications. It is based on the NVIDIA Hopper architecture, which combines advanced features and capabilities to accelerate AI training and inference on larger models.

Infrastructure and technology partners

Perfect for a range of workloads

Deploying AI-based workloads on CUDO Compute is easy and cost-effective. Follow our AI-related tutorials.

Deploying rendering-based workloads on CUDO Compute is easy and cost-effective.

From video editing to image generation, virtualization is ideal for your content creation needs.

Purpose-built H100 clusters, designed and managed by CUDO

Deployed across 16 ISO-certified data centres

From 8 to 1,000+ GPUs in a single deployment

NVIDIA Quantum-X800 InfiniBand or Spectrum-X Ethernet networking

Expert rack-level design, installation, and benchmarking before handoff

24/7 monitoring, management, and engineering support

Compatible with Slurm, Kubernetes, and NVIDIA Base Command

Available at the most cost-effective pricing

Launch your AI products faster with on-demand GPUs and a global network of data center partners

Bare metal

Powered by renewable energy

No noisy neighbors

Spectrum-X local networking

300 Gbps external connectivity

NVMe SSD storage

Enterprise

Powerful GPU clusters

Scalable data center colocation

Large quantities of GPUs and hardware

Optimized for your requirements

Expert installation

Scale as your demand grows

Specifications

Browse specifications for the NVIDIA H100 GPU

Starting from

Contact us for pricing

Architecture

NVIDIA Hopper

GPU

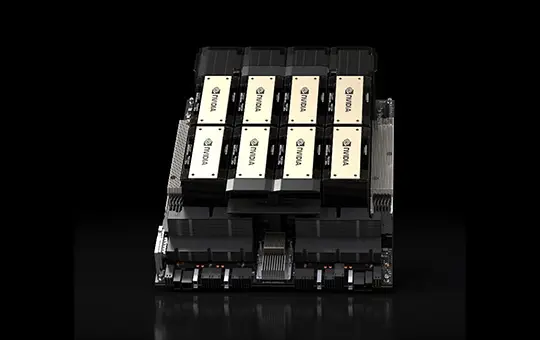

8x NVIDIA H100 Tensor Core GPUs

GPU memory

640 GB total (80 GB per GPU), 24 TB/s aggregate bandwidth

FP64 tensor core performance

67 teraFLOPS

FP32

67 teraFLOPS

NVIDIA NVSwitch

4x Fourth-generation NVIDIA NVSwitch

NVIDIA NVLink bandwidth

7.2 TB/s aggregate bidirectional bandwidth (900 GB/s per GPU)

System power usage

~10.2 kW max

CPU

Dual Intel Xeon Platinum 8480C Processors (112 cores total) or AMD EPYC equivalents

System memory

2 TB, configurable to 4 TB

Networking

4x OSFP ports serving 8x single-port NVIDIA ConnectX-7 VPI (Up to 400 Gb/s NVIDIA InfiniBand/Ethernet) 2x dual-port QSFP112 NVIDIA ConnectX-7 VPI (Up to 400 Gb/s NVIDIA InfiniBand/Ethernet)

Management network

10Gb/s onboard NIC with RJ45, 100Gb/s Ethernet NIC. Host baseboard management controller (BMC) with RJ45

Storage

OS: 2x 1.9 TB NVMe M.2, internal storage: 8x 3.84 TB NVMe U.2

Software

NVIDIA AI Enterprise (optimized AI software), NVIDIA Base Command (orchestration and cluster management), NVIDIA DGX OS / Ubuntu / Red Hat Enterprise Linux / Rocky

Rack units (RU)

8

Operating temperature

5-30°C (41-86°F)
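The aggregate figures quoted in the specifications follow from the per-GPU numbers. As a quick sanity check, the minimal Python sketch below recomputes them from the values listed on this page. The variable names are illustrative, and the per-GPU memory bandwidth it prints is simply the quoted aggregate divided by eight, not an official per-GPU figure.

```python
# Recompute the aggregate figures from the per-GPU values in the spec sheet.
# All inputs come from this page; the names are illustrative only.

GPU_COUNT = 8
MEMORY_PER_GPU_GB = 80            # GB of GPU memory per H100
NVLINK_PER_GPU_GB_S = 900         # GB/s bidirectional NVLink bandwidth per GPU
AGGREGATE_MEMORY_BW_TB_S = 24     # TB/s, quoted for the whole 8-GPU system

total_memory_gb = GPU_COUNT * MEMORY_PER_GPU_GB
aggregate_nvlink_tb_s = GPU_COUNT * NVLINK_PER_GPU_GB_S / 1000
implied_memory_bw_per_gpu_tb_s = AGGREGATE_MEMORY_BW_TB_S / GPU_COUNT

print(f"Total GPU memory: {total_memory_gb} GB")
print(f"Aggregate NVLink bandwidth: {aggregate_nvlink_tb_s} TB/s")
print(f"Implied memory bandwidth per GPU: {implied_memory_bw_per_gpu_tb_s} TB/s")
```

The first two results match the 640 GB and 7.2 TB/s figures in the specifications.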

Ideal use cases for the NVIDIA H100 GPU

Explore use cases for the NVIDIA H100, including AI inference, deep learning, and high-performance computing.

AI inference

Engineers and scientists across various domains can use the NVIDIA H100 to accelerate AI inference workloads, such as image and speech recognition. The H100's Tensor Cores process large amounts of data quickly, which makes it well suited to real-time inference applications.

Deep learning

The NVIDIA H100 Tensor Core GPU supports a wide range of deep learning use cases on larger models. It lets data scientists and researchers handle large datasets and perform the complex computations needed to train deep neural networks.
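To get a feel for what "larger models" means against the 640 GB of GPU memory in one 8-GPU system, here is a rough sizing sketch in Python. It rests on a common rule of thumb that is an assumption here, not a figure from this page: mixed-precision training with the Adam optimizer keeps about 16 bytes of model state per parameter. It ignores activations, buffers, and fragmentation, so real requirements are higher. The function names are hypothetical.

```python
import math

# Rough sizing only. Assumption (not from this page): mixed-precision Adam
# training holds ~16 bytes of state per parameter:
#   2 (fp16 weights) + 2 (fp16 gradients) + 12 (fp32 master weights,
#   momentum, and variance). Activations and overheads are ignored.
BYTES_PER_PARAM_TRAINING = 16
NODE_GPU_MEMORY_GB = 640  # 8 x 80 GB, from the spec sheet


def model_state_gb(params_billions, bytes_per_param=BYTES_PER_PARAM_TRAINING):
    """Approximate model-state size in GB (1 GB = 1e9 bytes)."""
    # (params_billions * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billions * bytes_per_param


def nodes_needed(params_billions, bytes_per_param=BYTES_PER_PARAM_TRAINING,
                 node_memory_gb=NODE_GPU_MEMORY_GB):
    """Minimum number of 8-GPU systems whose combined GPU memory holds the state."""
    return math.ceil(model_state_gb(params_billions, bytes_per_param) / node_memory_gb)


for size in (7, 70):
    print(f"{size}B parameters: ~{model_state_gb(size)} GB of model state, "
          f"at least {nodes_needed(size)} system(s)")
```

Passing `bytes_per_param=2` gives a comparable lower bound for holding fp16 weights only, for example when serving a model for inference.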

High-performance computing

Organizations can deploy the NVIDIA H100 GPU to accelerate high-performance computing workloads, such as scientific simulations, weather forecasting, and financial modeling. Its high memory bandwidth and processing power make it practical to run these workloads at every scale.

Blog

Resources

- Emmanuel Ohiri

Browse alternative GPU solutions for your workloads

Access a wide range of performant NVIDIA and AMD GPUs to accelerate your AI, ML & HPC workloads

NVIDIA H100 PCIe

Price on request

Scale with high-performance H100 GPUs on our reserved cloud.