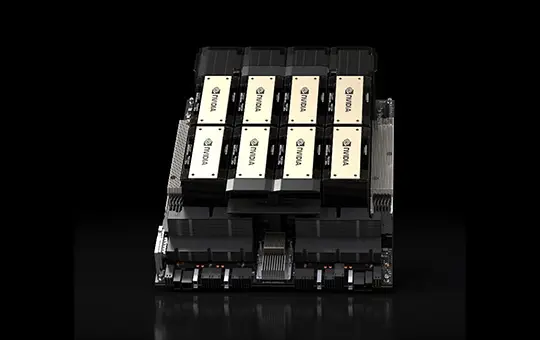

GB200 NVL72

GB200 NVL72 connects 36 Grace CPUs and 72 Blackwell GPUs in a liquid-cooled, rack-scale design. Its 72-GPU NVLink domain acts as a single massive GPU and delivers 30X faster real-time inference for trillion-parameter LLMs.

Infrastructure and technology partners

Perfect for a range of workloads

Deploying AI-based workloads on CUDO Compute is easy and cost-effective. Follow our AI-related tutorials.

Deploying rendering-based workloads on CUDO Compute is easy and cost-effective.

From video editing to image generation, virtualization is ideal for your content creation needs.

Purpose-built GB200 clusters, designed and managed by CUDO

Deployed across 16 ISO-certified data centres

From 8 to 1,000+ GPUs in a single deployment

NVIDIA Quantum-X800 InfiniBand or Spectrum-X Ethernet networking

Expert rack-level design, installation, and benchmarking before handoff

24/7 monitoring, management, and engineering support

Compatible with Slurm, Kubernetes, and NVIDIA Base Command

Available at the most cost-effective pricing

Launch your AI products faster with on-demand GPUs and a global network of data center partners

Bare metal

Powered by renewable energy

No noisy neighbors

Spectrum-X local networking

300Gbps external connectivity

NVMe SSD storage

Enterprise

Powerful GPU clusters

Scalable data center colocation

Large quantities of GPUs and hardware

Optimize to your requirements

Expert installation

Scale as your demand grows

Specifications

Browse specifications for the NVIDIA GB200 GPU

Starting from

Contact us for pricing

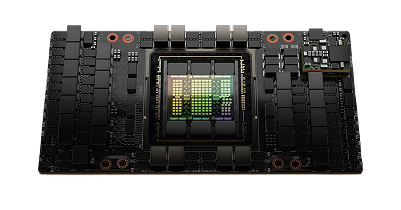

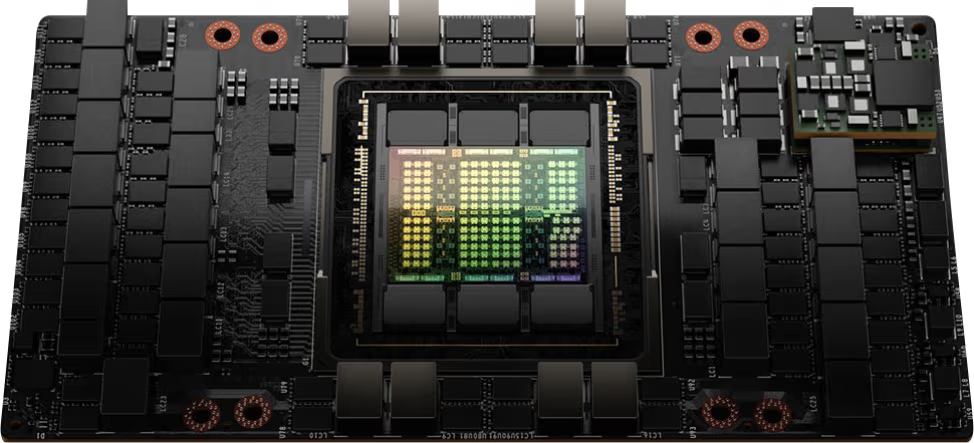

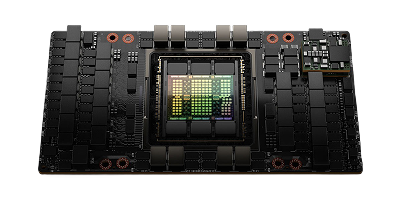

| Specification | GB200 NVL72 |
| --- | --- |
| Architecture | NVIDIA Blackwell |
| GPU | 72x NVIDIA Blackwell GPUs |
| GPU memory | 13.5 TB total HBM3e, 576 TB/s aggregate bandwidth |
| FP4 tensor core performance | 1,440 PFLOPS |
| FP8 tensor core performance | 720 PFLOPS |
| NVIDIA NVSwitch | 9x L1 NVIDIA NVLink Switches (fifth-generation) |
| NVIDIA NVLink bandwidth | 130 TB/s aggregate intra-rack bandwidth (1.8 TB/s per GPU, allowing all 72 GPUs to communicate as one) |
| System power usage | ~120 kW rack TDP |
| CPU | 36x NVIDIA Grace CPUs (2,592 Arm Neoverse V2 cores total) |
| System memory | Up to 17 TB LPDDR5X, up to 18.4 TB/s aggregate bandwidth |
| Networking | Up to 72x OSFP single-port NVIDIA ConnectX-7 or ConnectX-8 VPI (400 Gb/s to 800 Gb/s NVIDIA InfiniBand/Ethernet); up to 18x dual-port NVIDIA BlueField-3 VPI DPUs |
| Management network | Host baseboard management controller (BMC) with RJ45 per tray, 2x Top-of-Rack (TOR) out-of-band management switches |
| Storage | Per compute tray (18 compute trays total): 8x E1.S Gen5 NVMe drive bays for internal storage, 1x M.2 NVMe SSD for OS boot |
| Software | NVIDIA AI Enterprise (optimized AI software), NVIDIA Mission Control (AI data center operations/orchestration), NVIDIA Base Command, Ubuntu / RHEL |
| Rack units (RU) | 48 |
| Cooling | Requires dedicated Direct-to-Chip (D2C) liquid cooling. CPUs, GPUs, and NVSwitches are liquid-cooled via an in-rack or in-row Coolant Distribution Unit, while networking modules and storage are air-cooled. |
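The rack-level figures in the specifications above can be broken down into per-GPU, per-CPU, and per-tray numbers with simple division. The sketch below is only a cross-check of the published totals, not vendor-confirmed per-device specifications: the constant names are illustrative, terabytes are assumed to be decimal (1 TB = 1,000 GB), and the per-tray split assumes GPUs and CPUs are spread evenly across the 18 compute trays.

```python
# Rack-level figures copied from the specifications above.
GPUS = 72
CPUS = 36
COMPUTE_TRAYS = 18
HBM_TOTAL_TB = 13.5
HBM_BANDWIDTH_TBPS = 576
FP4_PFLOPS = 1440
FP8_PFLOPS = 720
NVLINK_PER_GPU_TBPS = 1.8
CPU_CORES_TOTAL = 2592
DRIVE_BAYS_PER_TRAY = 8

# Derived values (assumption: resources are divided evenly and TB is decimal).
derived = {
    "hbm_per_gpu_gb": HBM_TOTAL_TB * 1000 / GPUS,
    "hbm_bandwidth_per_gpu_tbps": HBM_BANDWIDTH_TBPS / GPUS,
    "fp4_per_gpu_pflops": FP4_PFLOPS / GPUS,
    "fp8_per_gpu_pflops": FP8_PFLOPS / GPUS,
    "nvlink_aggregate_tbps": round(NVLINK_PER_GPU_TBPS * GPUS, 1),
    "cores_per_cpu": CPU_CORES_TOTAL // CPUS,
    "gpus_per_tray": GPUS // COMPUTE_TRAYS,
    "cpus_per_tray": CPUS // COMPUTE_TRAYS,
    "drive_bays_total": DRIVE_BAYS_PER_TRAY * COMPUTE_TRAYS,
}

for name, value in derived.items():
    print(f"{name}: {value}")
```

The NVLink product comes to 129.6 TB/s, which matches the quoted 130 TB/s aggregate after rounding.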

Ideal use cases for the NVIDIA GB200 NVL72

Explore use cases for the NVIDIA GB200 NVL72, including supercharging next-generation AI and accelerated computing, energy-efficient infrastructure, and massive-scale training.

Supercharging next-generation AI and accelerated computing

GB200 NVL72 introduces a second-generation Transformer Engine that enables FP4 AI. Coupled with fifth-generation NVIDIA NVLink, it delivers 30X faster real-time inference for trillion-parameter language models.
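To see why lower precision matters for trillion-parameter models, it helps to estimate how much memory the weights alone occupy at each precision. The following is a rough back-of-the-envelope sketch, not a sizing tool: the function name and the one-trillion parameter count are illustrative, and the estimate ignores KV cache, activations, and any per-block scaling metadata that low-precision formats may carry.

```python
# Total rack HBM3e from the specifications (decimal terabytes assumed).
HBM_TOTAL_TB = 13.5

# Illustrative model size: one trillion parameters.
NUM_PARAMS = 1e12

# Nominal storage per parameter at each precision, in bytes.
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}


def weight_footprint_tb(num_params: float, bytes_per_param: float) -> float:
    """Return the weights-only memory footprint in decimal terabytes."""
    return num_params * bytes_per_param / 1e12


for precision, width in BYTES_PER_PARAM.items():
    footprint = weight_footprint_tb(NUM_PARAMS, width)
    share = footprint / HBM_TOTAL_TB
    print(f"{precision}: {footprint:.1f} TB of weights, {share:.1%} of rack HBM")
```

Under these assumptions, halving the precision halves the weight footprint, which leaves more of the rack's HBM for KV cache and larger batches.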

Energy-efficient infrastructure

Liquid-cooled GB200 NVL72 racks reduce a data center’s carbon footprint and energy consumption. Liquid cooling increases compute density, reduces the amount of floor space used, and facilitates high-bandwidth, low-latency GPU communication with large NVLink domain architectures.

Massive-scale training

GB200 NVL72 includes a second-generation Transformer Engine with FP8 precision, enabling 4X faster training for large language models at scale.

Blog

Resources

- Emmanuel Ohiri

Browse alternative GPU solutions for your workloads

Access a wide range of performant NVIDIA and AMD GPUs to accelerate your AI, ML & HPC workloads

NVIDIA H100 PCIe

Price on request

Scale with high-performance H100 GPUs on our reserved cloud.